SAN FRANCISCO — The school bus was displaying its stop sign and flashing red warning lights, a police report said, when Tillman Mitchell, 17, stepped off one afternoon in March. Then a Tesla Model Y approached on North Carolina Highway 561.

“It’s very dangerous for motorcycles to be around Teslas”

Karla Weinbrenner, July 25, 2024

17 fatalities, 736 crashes: The shocking toll of Tesla’s Autopilot

Tesla’s driver-assistance system, known as Autopilot, has been involved in far more crashes than previously reported

It struck Mitchell at 45 mph. The teenager was thrown into the windshield, flew into the air and landed facedown in the road, according to his great-aunt, Dorothy Lynch. Mitchell’s father heard the crash and rushed from his porch to find his son lying in the middle of the road.

“If it had been a smaller child,” Lynch said, “the child would be dead.”

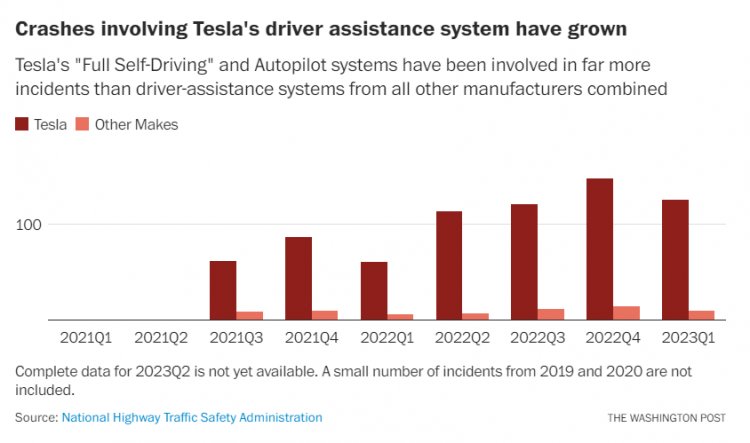

The crash in North Carolina’s Halifax County, where a futuristic technology came barreling down a rural highway with devastating consequences, was one of 736 U.S. crashes since 2019 involving Teslas in Autopilot mode — far more than previously reported, according to a Washington Post analysis of National Highway Traffic Safety Administration data. The number of such crashes has surged over the past four years, the data shows, reflecting the hazards associated with increasing use of Tesla’s driver-assistance technology as well as the growing presence of the cars on the nation’s roadways.

The number of deaths and serious injuries associated with Autopilot also has grown significantly, the data shows. When authorities first released a partial accounting of accidents involving Autopilot in June 2022, they counted only three deaths definitively linked to the technology. The most recent data includes at least 17 fatal incidents, 11 of them since May 2022, and five serious injuries.

Mitchell survived the March crash but suffered a fractured neck and a broken leg and had to be placed on a ventilator. He still experiences memory problems and has trouble walking. His great-aunt said the incident should serve as a warning about the dangers of the technology.

“I pray that this is a learning process,” Lynch said. “People are too trusting when it comes to a piece of machinery.”

Tesla chief executive Elon Musk has said that cars operating in Tesla’s Autopilot mode are safer than those piloted solely by human drivers, citing crash rates when the modes of driving are compared. He has pushed the carmaker to develop and deploy features programmed to maneuver the roads — navigating stopped school buses, fire engines, stop signs and pedestrians — arguing that the technology will usher in a safer, virtually accident-free future. While it’s impossible to say how many crashes may have been averted, the data shows clear flaws in the technology being tested in real time on America’s highways.

Tesla’s 17 fatal crashes reveal distinct patterns, The Post found: Four involved a motorcycle. Another involved an emergency vehicle. Meanwhile, some of Musk’s decisions — such as widely expanding the availability of the features and stripping the vehicles of radar sensors — appear to have contributed to the reported uptick in incidents, according to experts who spoke with The Post.

Tesla and Elon Musk did not respond to a request for comment.

NHTSA said a report of a crash involving driver-assistance does not itself imply that the technology was the cause. “NHTSA has an active investigation into Tesla Autopilot, including Full Self-Driving,” spokeswoman Veronica Morales said, noting that the agency doesn’t comment on open investigations. “NHTSA reminds the public that all advanced driver assistance systems require the human driver to be in control and fully engaged in the driving task at all times. Accordingly, all state laws hold the human driver responsible for the operation of their vehicles.”

Musk has repeatedly defended his decision to push driver-assistance technologies to Tesla owners, arguing that the benefit outweighs the harm.

“At the point of which you believe that adding autonomy reduces injury and death, I think you have a moral obligation to deploy it even though you’re going to get sued and blamed by a lot of people,” Musk said last year. “Because the people whose lives you saved don’t know that their lives were saved. And the people who do occasionally die or get injured, they definitely know — or their estate does.”

Former NHTSA senior safety adviser Missy Cummings, a professor at George Mason University’s College of Engineering and Computing, said the surge in Tesla crashes is troubling.

“Tesla is having more severe — and fatal — crashes than people in a normal data set,” she said in response to the figures analyzed by The Post. One likely cause, she said, is the expanded rollout over the past year and a half of Full Self-Driving, which brings driver-assistance to city and residential streets. “The fact that … anybody and everybody can have it. … Is it reasonable to expect that might be leading to increased accident rates? Sure, absolutely.”

Cummings said the number of fatalities compared with overall crashes was also a concern.

It is unclear whether the data captures every crash involving Tesla’s driver-assistance systems. NHTSA’s data includes some incidents in which it is “unknown” whether Autopilot or Full Self-Driving was in use. Those include three fatalities, one of them last year.

NHTSA, the nation’s top auto safety regulator, began collecting the data after a federal order in 2021 required automakers to disclose crashes involving driver-assistance technology. The total number of crashes involving the technology is minuscule compared with all road incidents; NHTSA estimates that more than 40,000 people died in wrecks of all kinds last year.

Since the reporting requirements were introduced, the vast majority of the 807 automation-related crashes have involved Teslas, the data shows. Tesla — which has experimented more aggressively with automation than other automakers have — also is linked to almost all of the deaths.

Subaru ranks second with 23 reported crashes since 2019. The enormous gulf probably reflects wider deployment and use of automation across Tesla’s fleet of vehicles, as well as the broader range of circumstances in which Tesla drivers are encouraged to use Autopilot.

Autopilot, which Tesla introduced in 2014, is a suite of features enabling the car to maneuver itself from highway on-ramp to off-ramp, maintaining speed and distance behind other vehicles and following lane lines. Tesla offers it as a standard feature on its vehicles, and more than 800,000 Autopilot-equipped Teslas are on U.S. roads, though advanced iterations come at a cost.

Full Self-Driving, an experimental feature that customers must purchase, allows Teslas to maneuver from Point A to Point B by following turn-by-turn directions along a route, halting for stop signs and traffic lights, making turns and lane changes, and responding to hazards along the way. With either system, Tesla says drivers must monitor the road and intervene when necessary.

The uptick in crashes coincides with Tesla’s aggressive rollout of Full Self-Driving, which has expanded from about 12,000 users to nearly 400,000 in a little more than a year. Nearly two-thirds of all driver-assistance crashes that Tesla has reported to NHTSA occurred in the past year.

Philip Koopman, a Carnegie Mellon University professor who has conducted research on autonomous-vehicle safety for 25 years, said the prevalence of Teslas in the data raises crucial questions.

“A significantly higher number certainly is a cause for concern,” he said. “We need to understand if it’s due to actually worse crashes or if there’s some other factor such as a dramatically larger number of miles being driven with Autopilot on.”

In February, Tesla issued a recall of more than 360,000 vehicles equipped with Full Self-Driving over concerns that the software prompted its vehicles to disobey traffic lights, stop signs and speed limits.

The flouting of traffic laws, documents posted by the safety agency said, “could increase the risk of a collision if the driver does not intervene.” Tesla said it remedied the issues with an over-the-air software update, remotely addressing the risk.

While Tesla has constantly tweaked its driver-assistance software, it also took the unprecedented step of eliminating radar sensors from new cars and disabling them in vehicles already on the road, depriving them of a critical sensor as Musk pushed a simpler hardware set amid the global computer chip shortage. Musk said last year, “Only very high resolution radar is relevant.”

The company has recently taken steps to reintroduce radar sensors, according to government filings first reported by Electrek.

In a March presentation, Tesla claimed that Full Self-Driving crashes at a rate at least one-fifth that of vehicles in normal driving, in a comparison of miles driven per collision. That claim, and Musk’s characterization of Autopilot as “unequivocally safer,” is impossible to test without access to the detailed data that Tesla possesses.

Autopilot, largely a highway system, operates in a less complex environment than the range of situations experienced by a typical road user.

It is unclear which of the systems was in use in the fatal crashes: Tesla has asked NHTSA not to disclose that information. In the section of the NHTSA data specifying the software version, Tesla’s incidents read — in all capital letters — “redacted, may contain confidential business information.”

Both Autopilot and Full Self-Driving have come under scrutiny in recent years. Transportation Secretary Pete Buttigieg told the Associated Press last month that Autopilot is not an appropriate name “when the fine print says you need to have your hands on the wheel and eyes on the road at all times.”

NHTSA has opened multiple probes into Tesla’s crashes and other problems with its driver-assistance software. One has focused on “phantom braking,” a phenomenon in which vehicles abruptly slow down for imagined hazards.

In one case last year, detailed by the Intercept, a Tesla Model S reportedly using driver-assistance suddenly braked in traffic on the San Francisco Bay Bridge, resulting in an eight-vehicle pileup that left nine people injured, including a 2-year-old.

In other complaints filed with NHTSA, owners say the cars slammed on the brakes when encountering semi trucks in oncoming lanes.

Many crashes have involved similar settings and conditions. NHTSA has received more than a dozen reports of Teslas slamming into parked emergency vehicles while in Autopilot, for example. Last year, NHTSA upgraded its investigation of those incidents to an “engineering analysis.”

Also last year, NHTSA opened two consecutive special investigations into fatal crashes involving Tesla vehicles and motorcyclists. One occurred in Utah, when a motorcyclist on a Harley-Davidson was traveling in a high-occupancy lane on Interstate 15 outside Salt Lake City shortly after 1 a.m., according to authorities. A Tesla in Autopilot struck the bike from behind.

“The driver of the Tesla did not see the motorcyclist and collided with the back of the motorcycle, which threw the rider from the bike,” the Utah Department of Public Safety said. The motorcyclist died at the scene, Utah authorities said.

“It’s very dangerous for motorcycles to be around Teslas,” Cummings said.

Of hundreds of Tesla driver-assistance crashes, NHTSA has focused on about 40 incidents for further analysis, hoping to gain deeper insight into how the technology operates. Among them was the North Carolina crash involving Mitchell, the student disembarking from the school bus.

Afterward, Mitchell awoke in the hospital with no recollection of what happened. He still doesn’t grasp the seriousness of it, his great-aunt said. His memory problems are hampering him as he tries to catch up in school. Local outlet WRAL reported that the impact of the crash shattered the Tesla’s windshield.

The Tesla driver, Howard G. Yee, was charged with multiple offenses in the crash, including reckless driving, passing a stopped school bus and striking a person, a Class I felony, according to North Carolina State Highway Patrol Sgt. Marcus Bethea.

Authorities said Yee had fixed weights to the steering wheel to trick Autopilot into registering the presence of a driver’s hands: Autopilot disables the functions if steering pressure is not applied for an extended period. Yee directed a reporter to his attorney, who did not respond to The Post’s request for comment.

NHTSA is still investigating the crash, and an agency spokeswoman declined to offer further details, citing the ongoing investigation. Tesla asked the agency to exclude the company’s summary of the incident from public view, saying it “may contain confidential business information.”

Lynch said her family has kept Yee in their thoughts and regards his actions as a mistake prompted by excessive trust in the technology, what experts call “automation complacency.”

“We don’t want his life to be ruined over this stupid accident,” she said.

But when asked about Musk, Lynch had sharper words.

“I think they need to ban automated driving,” she said. “I think it should be banned.”

Copyright 2025 Leather & Lace MC - All Rights Reserved.